This article applies as of PRTG 22

SNMP HPE ProLiant System Health sensor and the "Error in overall status" after PRTG update

With the update to PRTG 14.4.12, we changed the method that the SNMP HPE ProLiant System Health sensor uses to determine the Overall Status channel. A new OID checks for more possible error cases, so the sensor now detects errors that it could not show before. This is the reason why you might suddenly see the Down status for your HPE ProLiant servers after updating PRTG. PRTG works as expected and correctly reports that there is something wrong.

Check the detected error on your HPE server

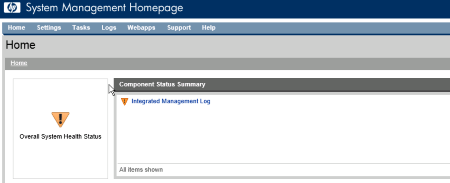

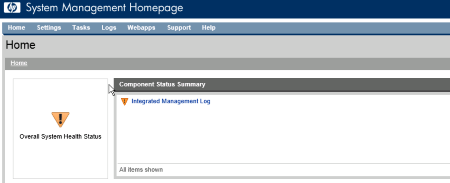

Log in to the web interface System Management Homepage of your HPE Server. On top of the Home page, you see an indicator that shows you that your system has a problem. Next to it, you see the Component Status Summary that shows you which parts of your system are affected.

Here is an example that shows an error in the Integrated Management Log (IML):

Click to enlarge.

Troubleshooting solutions

Option 1: Correct the error on your HPE ProLiant server

After reading the log and correcting the error, you have to clear the IML from the command line:

- Get access to the logs:

ipmiutil sel -N [Host IP Address] -U [User Name] -P [Password] -F HP

- Clear the logs:

ipmiutil sel -d -V 4 -N [Host IP Address] -U [User Name] -P [Password] -F HP

- Delete the content of IML:

"C:\Program Files\Compaq\Cpqimlv\cpqimlv.exe" /export:"c:\iml.txt" /clear

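If you need to clear the log on several servers, the two ipmiutil calls above can be assembled by a small script. The following is only a minimal sketch: the function name build_iml_commands is hypothetical, the flags are copied unchanged from the commands above, and it assumes that ipmiutil is available on the PATH of the machine that runs the script.

```python
import subprocess


def build_iml_commands(host, user, password):
    """Return the 'show' and 'clear' ipmiutil argument lists for one host.

    The flags are taken verbatim from the commands in this article;
    nothing is executed here.
    """
    common = ["-N", host, "-U", user, "-P", password, "-F", "HP"]
    show = ["ipmiutil", "sel"] + common
    clear = ["ipmiutil", "sel", "-d", "-V", "4"] + common
    return show, clear


def clear_iml(host, user, password):
    """Show the log first, then clear it. Assumes ipmiutil is on the PATH."""
    show, clear = build_iml_commands(host, user, password)
    subprocess.run(show, check=True)   # raises CalledProcessError on failure
    subprocess.run(clear, check=True)
```

Call clear_iml("[Host IP Address]", "[User Name]", "[Password]") with your own values only after you have read the log and corrected the error.
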
Option 2: Change the sensor's behavior in PRTG

Note: The following instructions configure your HPE sensors to ignore some or all of the status information that the HPE hardware reports.

2a: Deactivate checking the overall status

You can deactivate checking the overall status so that the respective channel does not show the Down status at all.

Click the Overall Status channel to open the channel settings. Under Lookup, select None to completely disable alerting for this channel.

2b: Use an alternate lookup file for the sensor

If you do not want the sensor to strictly show the Down status based on the new OID, you can redefine the sensor behavior by selecting an alternate lookup file.

Click the Overall Status channel to open the channel settings. Under Lookup, select the lookup file prtg.standardlookups.hp.statuswarning.ovl. The default lookup file is prtg.standardlookups.hp.status.ovl.

2c: Customize the default lookup file for the sensor

You can also redefine the sensor behavior in the respective default lookup file that is used for the sensor's Overall Status channel.

- Go to the \lookups folder in your PRTG program directory and copy the file prtg.standardlookups.hp.status.ovl into the \lookups\custom folder.

- Open your copy in an editor.

- In the lookup definition, change state="Error" to state="Warning" and save the file.

- This will cause the sensor to show the Warning status instead of the Down status if your HPE hardware reports an error.

- As long as your custom file exists, PRTG prefers your customizations to the original lookup settings.

- For details, see PRTG Manual: Define Lookups, section Customizing Lookups.
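
The copy-and-edit steps above can also be scripted. The following is a minimal sketch, not an official tool: the function name make_warning_lookup is hypothetical, and you have to pass the path of the \lookups folder of your own PRTG installation. The script leaves the original file untouched, writes the modified copy into the custom subfolder, and works on raw bytes so that encoding and line endings stay as they are.

```python
from pathlib import Path


def make_warning_lookup(lookups_dir, file_name="prtg.standardlookups.hp.status.ovl"):
    """Copy a lookup file into <lookups_dir>/custom with Error states set to Warning.

    Returns the path of the custom file and the number of replaced states.
    """
    lookups_dir = Path(lookups_dir)
    custom_dir = lookups_dir / "custom"
    custom_dir.mkdir(exist_ok=True)

    # Read the original definition; it is never modified.
    original = (lookups_dir / file_name).read_bytes()
    replaced = original.count(b'state="Error"')

    # Write the customized copy that PRTG prefers over the original.
    target = custom_dir / file_name
    target.write_bytes(original.replace(b'state="Error"', b'state="Warning"'))
    return target, replaced
```

A call such as make_warning_lookup(r"[PRTG program directory]\lookups") returns the path of the new file and the number of changed entries. If the count is 0, the file did not contain state="Error" and the copy is identical to the original. PRTG may need to reload its lookup files before the change takes effect.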

Note: In PRTG 15.x.16 through 18.1.37, there was an IML status channel. As of PRTG 18.1.38, this channel no longer exists.
